J Psychiatry Brain Sci. 2026;11(2):e260005. https://doi.org/10.20900/jpbs.20260005

1 School of Health and Biomedical Sciences, Federation University, Melbourne, VIC 3000, Australia

2 Psychological & Educational Consultancy Services, Perth, WA 6008, Australia

3 Graduate School of Education, University of Western Australia, Perth, WA 6009, Australia

4 School of Health and Biomedical Sciences, Royal Melbourne Institute of Technology University, Melbourne, VIC 3000, Australia

* Correspondence: Stephen Houghton

Background: Attention deficit hyperactivity disorder (ADHD) is a neurodevelopmental disorder diagnosed through comprehensive clinical interviews, behavioural rating scales, and developmental history review. The Adult PsychProfiler (APP), a comprehensive global screening instrument for the concurrent investigation of the 17 most common DSM-5 disorders in adults, includes items for ADHD. The APP comprises a self-report form (APP-SRF) and an observer-report form (APP-ORF), but the psychometric properties of its ADHD-IA and ADHD-HI screening scales have yet to be fully evaluated. Having valid measures for adult ADHD is important because they give adults access to evidence-based treatments associated with improved quality of life. Such scales must be robust, however. Therefore, the aim of this study was to examine the psychometric properties of the PsychProfiler ADHD scales. Methods: The current study examined the psychometric properties of the separate ADHD screening scales for inattention [IA] and hyperactivity/impulsivity [HI] in the APP-SRF and APP-ORF with two samples of adults aged 18 years and older. The full sample (N = 257) comprised adults referred for a clinic-based ADHD assessment. Since not all of these individuals had the scores necessary for an examination of the convergent validity of the ADHD factors, we examined a subsample (N = 247) for this purpose. All measures were completed using an online tool prior to attending the clinic setting. Results: Data from the full sample were analysed using confirmatory factor analysis, and the findings supported the theorized 2-factor model for both the self-report and observer-report versions. Better fit was evident for the 2-factor model than for the 1-factor, 3-factor and s-1 bifactor models. Data from the subsample, which was used to examine the convergent validity of the APP-SRF and APP-ORF ADHD scales, demonstrated good discriminant and convergent validity. There was also support for measurement invariance across self and observer ratings. Conclusion: The findings provide evidence for the psychometric properties of the ADHD items in the APP-SRF and APP-ORF, thereby providing a valuable measure for use by clinicians and researchers.

ADHD, Attention-Deficit/Hyperactivity Disorder; PP, PsychProfiler; CAPP, Child and Adolescent PsychProfiler; APP, Adult PsychProfiler; IA, inattention; HI, hyperactivity/impulsivity; CAARS, Conners’ Adult ADHD Rating Scale; s, self-ratings; o, observer ratings; WLSMV, mean- and variance-adjusted weighted least squares; CI, confidence interval; RMSEA, root mean square error of approximation; CFI, comparative fit index; TLI, Tucker-Lewis Index

Attention-Deficit/Hyperactivity Disorder (ADHD) is a clinically heterogeneous neurodevelopmental disorder characterised by a persistent and pervasive pattern of inattention and/or hyperactivity-impulsivity that severely impairs daily functioning and significantly impacts quality of life [1]. The clinical presentation of ADHD is described as primarily inattentive, primarily hyperactive-impulsive, or combined, depending on the nature of the symptoms [2]. Although between 87% and 97% of clinicians use self-report measures in their assessment of ADHD, there is a need for systemic improvement in standardised assessments to enhance diagnostic accuracy [3].

The PP consists of two conceptually similar instruments: the Child and Adolescent PsychProfiler (CAPP) and the APP [4]. Both versions of the PP are comprehensive screening instruments for the simultaneous investigation of 17 of the most common disorders found in adults aged 18 years and older. For the APP there are two versions, one for completion by the individual (APP-SRF) and one for completion by observers, such as a parent, spouse, or close friend (APP-ORF) (see www.psychprofiler.com). Among the screening scales in the APP are Attention-Deficit/Hyperactivity Disorder Predominantly Inattentive (ADHD-IA) and Attention-Deficit/Hyperactivity Disorder Predominantly Hyperactive/Impulsive (ADHD-HI). These scales comprise the nine inattention (IA) and nine Hyperactivity/Impulsivity (HI) DSM-5 TR symptoms [2]. To date, the psychometric properties of the ADHD-IA and ADHD-HI screening scales have not been subjected to a comprehensive psychometric evaluation, despite their wide use in Australia. The major aim of the current study was to examine the psychometric properties of the ADHD-IA and ADHD-HI screening scales in the APP-SRF and APP-ORF.

According to the PP version 5 Manual, all previous versions of the PP (including the APP) have been found to be reliable and valid. While there are reports of inter-rater reliability, clinical calibration, application of a six-point scale for rating the items, and suitable readability (see [4]), evidence of factor structure, and validity remain unknown. Furthermore, there are no published data for these properties in relation to the APP-SRF and APP-ORF ADHD-IA and ADHD-HI screening scales.

As the ADHD items in the APP-SRF and APP-ORF were designed to measure ADHD as specified in the DSM-5, one major goal of the current study was to use independent cluster confirmatory factor analysis (ICM-CFA) to examine support for the DSM-5 based 2-factor ADHD models for both the APP-SRF and the APP-ORF. Related to this, we also examined the clarity of the item loadings, and the reliabilities and convergent validities of the IA and HI factors in this model. We also examined support for proposed structural 1-factor, 3-factor, and s-1 bifactor ADHD models. In the 1-factor model, all 18 ADHD symptoms load on a single ADHD factor. In the 3-factor model, all nine IA symptoms load only on the IA latent factor, the six hyperactivity (HY) symptoms load only on the HY latent factor, and the three impulsivity (IM) symptoms load only on the IM latent factor. As in the 2-factor model, the latent factors correlate with each other, there are no cross-loadings, all error variances are freely estimated, and all covariances between error variances are set to zero.
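The item-to-factor assignments described above can be written down as a small data structure. The following is only an illustrative sketch: the item labels (`ia1`, `hy1`, `im1`, and so on) are hypothetical names introduced here, not labels used in the APP, and the s-1 bifactor model is left out because it is not an independent cluster model.

```python
# Hypothetical item labels: ia1..ia9 (inattention), hy1..hy6 (hyperactivity),
# im1..im3 (impulsivity). These names are assumptions made for illustration.
IA = [f"ia{i}" for i in range(1, 10)]
HY = [f"hy{i}" for i in range(1, 7)]
IM = [f"im{i}" for i in range(1, 4)]

# Each model maps a latent factor to the items that load on it.
MODELS = {
    "1-factor": {"ADHD": IA + HY + IM},
    "2-factor": {"IA": IA, "HI": HY + IM},
    "3-factor": {"IA": IA, "HY": HY, "IM": IM},
}


def has_cross_loadings(model):
    """Return True if any item is assigned to more than one factor."""
    items = [item for indicators in model.values() for item in indicators]
    return len(items) != len(set(items))


for name, model in MODELS.items():
    sizes = {factor: len(indicators) for factor, indicators in model.items()}
    print(name, sizes, "cross-loadings:", has_cross_loadings(model))
```

In every model each of the 18 items appears under exactly one factor, which is what the independent cluster (no cross-loading) restriction amounts to.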

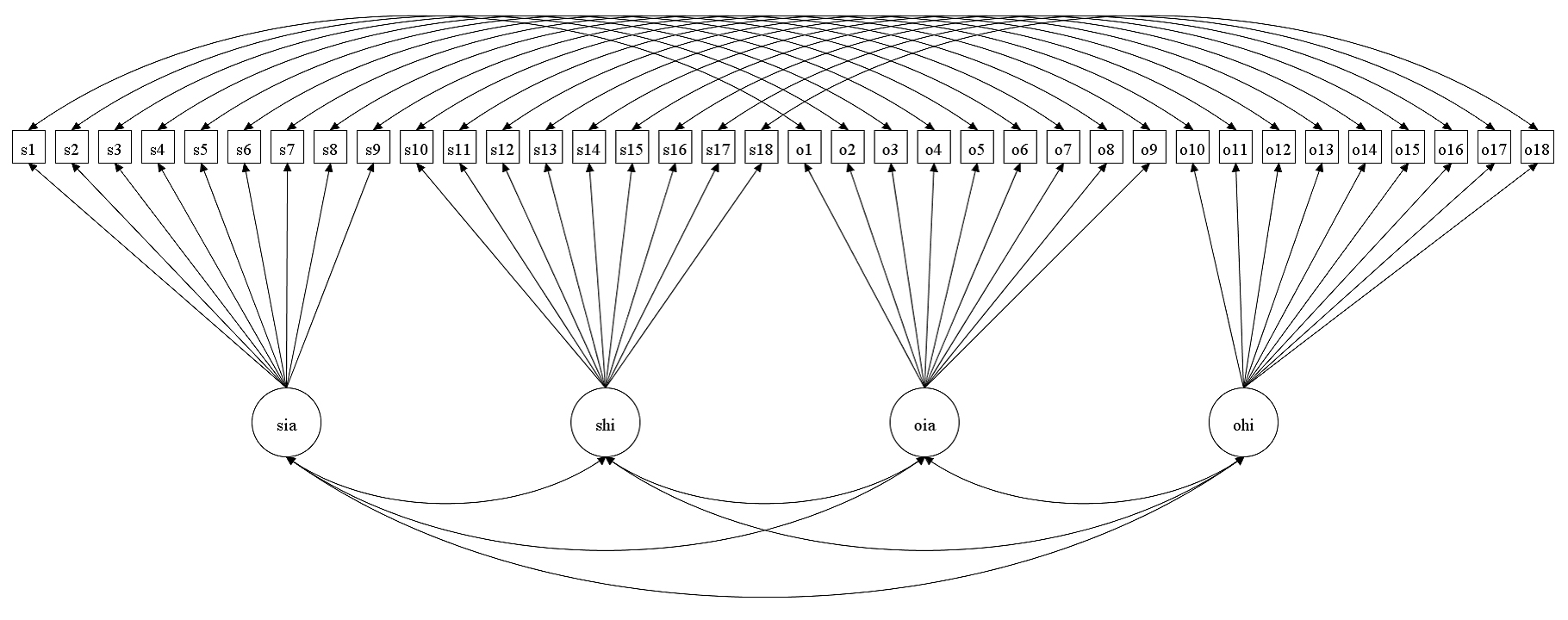

The s-1 bifactor ADHD model arose from an earlier ADHD model referred to as the bifactor ADHD model [5]. In the bifactor model, there is one general latent factor and specific latent factors for the ADHD IA and HI symptom groups. All 18 ADHD symptoms load on the general latent factor, and symptoms belonging to each symptom group (IA and HI) load on their own specific latent factors. None of the latent factors are correlated with each other (orthogonal model). As such, the general factor represents what is common (shared variances) among all the items in the measure, and the specific factors represent variances in them that are not accounted for by the general factor. However, despite its widespread use in past studies pertaining to understanding the structure of ADHD symptoms, e.g., [6,7], it has been argued in the literature that this approach is not appropriate for doing so [1,5,8–11]. Consequently, the s-1 bifactor model was proposed [5] for evaluating the structure of ADHD symptoms. In this model (see Figure 1), the HI symptoms (based on theory) are used as reference indicators for the g-factor. That is, the HI symptoms load only on the g-factor and do not have their own specific factor. The specific factor for IA (that is not allowed to correlate with the g-factor) is regressed on the g-factor. The resultant residual variances (i.e., true scores in the IA factor that are not shared with the g-factor) are inferred as the variances for the specific factors.

To date, many studies have examined the factor structure of ADHD symptoms in adult samples [10,12–14], but in general these studies found no support for the 1-factor model, and only adequate support for the 2-factor model. When examined, the findings showed a better fit for the 3-factor models with group factors for IA, HY, and IM [13,15].

Studies involving children and adolescents [5,16], and adults [10] have, however, shown more support for the s-1 bifactor model, with HI as the reference factor. Taking these findings into consideration, we expected that for the APP-SRF and APP-ORF ADHD-IA and ADHD-HI screening scales, there would be some support for the 2-factor model, with the factors (IA and HI) showing clarity (items loading significantly and saliently on their respective designated factors), good internal consistency, and validity (discriminant and convergent). Based on the existing literature, we also expected support for the 3- and s-1 bifactor models.

The current study also sought to examine (for the 2-factor model) measurement invariance for the APP ADHD items across self-ratings and observer-ratings. In this respect, current clinical practice guidelines suggest that the diagnostic evaluation of adult ADHD should include and integrate information (such as symptoms ratings) from self and others [17–19]. However, for this practice to be credible, consideration must be given to the measurement invariance properties of the ADHD symptoms across these respondents [20,21]. Measurement invariance (MI) refers to groups (in the current case, different respondents) reporting the same observed scores when they rate the same level of the underlying trait [20,21]. Applied to the APP-SRF and APP-ORF, measurement invariance would mean that the ADHD items in APP-SRF and APP-ORF have the same measurement and scaling properties, thereby allowing test scores from self and other to be psychometrically compared. If there is no support for measurement invariance across the groups being studied, then the results of such comparisons are confounded by differences in measurement and scaling properties. Expressed differently, the groups in question cannot be justifiably compared because the same observed scores may not reflect the same levels of the underlying latent construct being measured.

Related to this, past studies have shown that in terms of self and observer ratings for ADHD symptoms, the associations are generally moderate to poor [17,22,23], or inconclusive [24]. In part, Van Voorhees et al. (2010) [17] explained this in terms of a positive illusory bias. In brief, positive illusory bias involves an overestimation of one’s own abilities and competence, and as such, manifests as an overly positive perception of oneself either globally or in specific domains [25]. If so, it can be expected that for the same level of ADHD, individuals will underrate the severity of their own ADHD symptoms, compared to how they are likely to be rated by observers. This raises the possibility of lack of scalar invariance across self-ratings and observer-ratings. Despite the importance of measurement invariance issues when comparing self-reported ADHD information with that of observers, to date, no study has tested this. This is an important gap in our understanding of ADHD symptoms, which the current study sought to address.

There were two samples of participants involved in the research. The full sample (referred to as Sample 1) comprised 257 adults (male = 145, female = 112; age range 18 to 76 years, mean = 32.09, SD = 12.21), with APP-SRF and APP-ORF ratings for the ADHD-IA and ADHD-HI screening scales. There was no significant age difference between male and female participants, χ2(df = 1) = 0.315, ns. This sample was used to examine support for the different ADHD structural models for the ADHD items in the APP-SRF and APP-ORF ADHD-IA and ADHD-HI screening scales. In addition, the reliability (alpha and omega coefficients) and discriminant validities of the factors in the preferred ADHD model were examined along with measurement invariance across the APP-SRF and APP-ORF ADHD items.

Since not all participants in the full sample had the variables necessary for examining convergent validity, we formed a subsample of participants that also had these variables (referred to as Sample 2). The variables were both the self-report (CAARS-S) and observer-report (CAARS-O) forms of the Conners’ Adult ADHD Rating Scale (CAARS, [26]). Sample 2 comprised N = 247 adult participants (142 males, mean age = 31.01 years, SD = 10.63 years; 105 females, mean age = 30.98 years, SD = 10.99 years) seen in a clinic setting in Perth, Western Australia, for an ADHD assessment. These 247 participants had self-report ratings for both the APP-SRF ADHD-IA and ADHD-HI scales and the CAARS-S. Their ages ranged from 18 to 66 years (mean age = 30.95 years, SD = 10.75 years) and there were no significant age differences, t (df = 281) = 0.101, ns. Of the 247, N = 202 also had observer-report ratings for both the APP-ORF ADHD-IA and ADHD-HI scales and the CAARS-O [26]. Their ages ranged from 18 years to 66 years (mean age = 30.78 years, SD = 10.51 years) and once again there were no significant age differences, t (df = 281) = 0.346, ns. Of those participants who also had observer-ratings, N = 121 (50.3%) were male (mean age = 30.99 years, SD = 10.55 years) and 81 (39.7%) were female (mean age = 30.47 years, SD = 10.51 years).

Regarding statistical power, we used the software provided by Soper [27] to detect the minimum sample size required for the APP-SRF/APP-ORF ADHD items for the 3-factor ADHD model, specifying anticipated effect size of 0.3, desired statistical power level of 0.8, number of latent variables of 3, number of observed variables of 18, and probability level of 0.05. The analysis recommended a minimum sample size of 137. Thus, with 257 participants in our study (sample 1), our sample size was well above the recommended minimum sample size of 137.

Materials

Participants in Sample 1 completed the APP-SRF and APP-ORF questionnaires (Langsford et al. (2014) [4]), which were described in the introduction. For the present study we used only the APP-SRF and APP-ORF item ratings for the ADHD-IA and ADHD-HI screening scales. All ADHD items on these scales are rated on a six-point Likert scale (never = 0, rarely = 1, sometimes = 2, regularly = 3, often = 4, and very often = 5). For screening disorders with the APP-SRF and APP-ORF, all the item scores are recoded as symptom not present or symptom present, and the total score of the items within each screening scale has to meet the symptom threshold for that disorder in DSM-5. Accordingly, for screening ADHD-IA and ADHD-HI, the relevant item scores were recoded as: never, rarely, sometimes = 0 (symptom not present) and regularly, often, and very often = 1 (symptom present), as proposed by the authors of the PP [4]. In addition to the APP-SRF and APP-ORF, Sample 2 participants also completed the Conners’ Adult ADHD Rating Scale in both its CAARS-S and CAARS-O forms [26].
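The recoding rule can be illustrated with a short sketch. The function names are hypothetical, the example ratings are made up, and the default threshold of five symptoms is an assumption (the DSM-5 adult criterion); the text above states only that the DSM-5 symptom threshold must be met.

```python
def recode_item(rating):
    """Recode a six-point rating (0-5) as symptom absent (0) or present (1)."""
    if rating not in range(6):
        raise ValueError(f"rating must be an integer from 0 to 5, got {rating!r}")
    # never, rarely, sometimes -> 0; regularly, often, very often -> 1
    return 1 if rating >= 3 else 0


def screen_scale(ratings, symptom_threshold=5):
    """Return (symptom_count, screens_positive) for one nine-item scale.

    symptom_threshold=5 is an assumption (DSM-5 adult criterion); the
    source does not state the numeric threshold.
    """
    count = sum(recode_item(r) for r in ratings)
    return count, count >= symptom_threshold


# Made-up ratings for the nine items of one scale.
example_ratings = [0, 1, 2, 3, 4, 5, 3, 2, 4]
print(screen_scale(example_ratings))
```

Keeping the threshold as a parameter makes it easy to swap in a different cut-off if the instrument's manual specifies one.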

Conners’ Adult ADHD Rating Scales (CAARS)

The Conners’ Adult ADHD Rating Scales (CAARS) have identical versions for self-report (CAARS-S) and observer-report (CAARS-O) [26]. Both are 66-item scales and measure DSM-IV ADHD in adults aged 18 years and older. Individuals rate each item (on both scales) using a 4-point Likert scale (0 = not at all, 1 = just a little, 2 = pretty much, 3 = very much) in terms of how frequently they experience the symptoms. Higher scores indicate greater symptomology. There are subscales for Inattention/Memory problems, Hyperactivity/Restlessness, Impulsivity/Emotional Lability, Problems with Self-Concept, DSM-IV Inattentive Symptoms, DSM-IV Hyperactive-Impulsive Symptoms, DSM-IV ADHD Symptoms Total, and ADHD Index. All these scales have strong internal consistency reliabilities and convergent and discriminant validities [28,29].

Procedure

Ethics approval for the research was granted by the Human Research Ethics Committee of the University of Western Australia (UWA Human Research Ethics Approval Number-ROAP 2023/ET000965). Participation in the study was voluntary, and confidentiality of information was assured. The PP measures (including the APP) have a designated website (https://psychprofiler.com) that can be used by individuals interested in the online screening of DSM-5 TR disorders using any of the PP forms. The primary users are psychologists, psychiatrists, paediatricians, general practitioners, and the public. All participants were recruited from a clinic setting in Perth, Western Australia, where they had attended to complete an ADHD assessment at the request of a medical specialist, school, or parent(s). Data were gathered through an online tool prior to attendance at the clinic.

The PP, which is presented to respondents (and analysed) in English, provides participants with an optional check box to give consent to their data being used for instrument validation and research purposes. To be included in the present study, participants were required to have provided consent. Only consenting participants with APP ratings for the ADHD-IA and ADHD-HI items were included in Sample 1. The deidentified data and analytic code are available at: https://osf.io/qe6zs.

Data Analysis

Mean and standard deviation scores, and the dispersion statistics of the 18 ADHD symptoms in the APP-SRF and APP-ORF, were computed using the descriptive module in Jeffreys’ Amazing Statistics Program [30]. Mplus Version 7 [31] was used to analyse the ADHD factor models and for the CFA. Since the ADHD items were considered not to have nonnormality problems (details presented below), the goodness-of-fit of the CFA models was examined using the mean- and variance-adjusted weighted least squares (WLSMV) χ2. To address sample size inflation issues, the root mean square error of approximation (RMSEA), the comparative fit index (CFI), the Tucker-Lewis Index (TLI), and the standardized root mean square residual (SRMR) were used to evaluate the goodness-of-fit of models. Hu and Bentler (1999) [32] recommend that RMSEA values close to 0.06 or below be taken as good fit, 0.07 to <0.08 as moderate fit, 0.08 to 0.10 as marginal fit, and >0.10 as poor fit. For the CFI and TLI, values of 0.95 or above indicate good model-data fit, values of 0.90 to <0.95 marginally acceptable fit, and values below 0.90 unacceptable fit. For SRMR, values ≤0.08 are acceptable.
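The fit-index cut-offs listed above can be expressed as simple classification rules. The sketch below is illustrative only: the function names are hypothetical, the example values are made up, and two boundary choices are assumptions, namely that RMSEA values between 0.06 and 0.07 are treated as moderate and that SRMR values above 0.08 are labelled "not acceptable".

```python
def classify_rmsea(value):
    """Label an RMSEA value using the cut-offs quoted in the text."""
    if value <= 0.06:
        return "good"
    if value < 0.08:
        return "moderate"  # assumption: the (0.06, 0.07) gap is counted as moderate
    if value <= 0.10:
        return "marginal"
    return "poor"


def classify_cfi_tli(value):
    """Label a CFI or TLI value using the cut-offs quoted in the text."""
    if value >= 0.95:
        return "good"
    if value >= 0.90:
        return "marginally acceptable"
    return "unacceptable"


def classify_srmr(value):
    """Label an SRMR value; the label for values above 0.08 is an assumption."""
    return "acceptable" if value <= 0.08 else "not acceptable"


# Made-up fit values for a single model, for illustration only.
example_fit = {"RMSEA": 0.055, "CFI": 0.962, "TLI": 0.948, "SRMR": 0.071}
print(classify_rmsea(example_fit["RMSEA"]),
      classify_cfi_tli(example_fit["CFI"]),
      classify_cfi_tli(example_fit["TLI"]),
      classify_srmr(example_fit["SRMR"]))
```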

Additionally, for model acceptance, the loadings of the indicators in the model had to be significant and salient (>0.30) [33], and the factors had to exhibit acceptable discriminant validity (r < 0.85) [34] and acceptable omega reliability coefficients [35]. For the current study we used omega values of at least 0.70 as acceptable. (For those interested, we also report the alpha reliability coefficients of the factors.) For all correlations, effect sizes were examined using Cohen’s guidelines (small = 0.10, medium = 0.30, and large = 0.50) [36].
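As a rough illustration, these acceptance criteria and Cohen's correlation benchmarks can be checked programmatically. Everything named below is hypothetical: the function names, the made-up input values, the significance level of 0.05 (the text says only "significant"), the use of absolute values, and the label "negligible" for correlations below 0.10.

```python
def meets_acceptance_criteria(loadings, p_values, factor_correlations, omegas,
                              alpha=0.05):
    """Check each acceptance criterion and return the results as a dict.

    alpha=0.05 is an assumed significance level; the source does not state one.
    """
    return {
        "loadings_significant": all(p < alpha for p in p_values),
        "loadings_salient": all(abs(l) > 0.30 for l in loadings),
        "discriminant_validity": all(abs(r) < 0.85 for r in factor_correlations),
        "reliability": all(w >= 0.70 for w in omegas),
    }


def cohen_label(r):
    """Label a correlation using Cohen's benchmarks (0.10, 0.30, 0.50)."""
    size = abs(r)
    if size >= 0.50:
        return "large"
    if size >= 0.30:
        return "medium"
    if size >= 0.10:
        return "small"
    return "negligible"  # assumed label for values below the 'small' benchmark


# Made-up values for a two-factor solution.
result = meets_acceptance_criteria(
    loadings=[0.72, 0.65, 0.81, 0.58],
    p_values=[0.001, 0.001, 0.001, 0.002],
    factor_correlations=[0.61],
    omegas=[0.88, 0.91],
)
print(result, cohen_label(0.61))
```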

Testing measurement invariance for binary scores using WLSMV estimation focused on three main steps: configural, scalar, and at times error invariance. For dichotomous items, all thresholds are equally constrained across groups when testing invariance. Thus, the tests for metric invariance and scalar invariance are merged into one step. Support for invariance at this step is assumed to reflect support for both metric and scalar invariance. Since the ratings for the APP-SRF and APP-ORF IA and HI items involve the same adult sample, their ratings lacked independence. Thus, an extended single-group CFA model that included the ratings of APP-SRF and APP-ORF IA and HI items was used [37].

Figure 2 shows the path diagram of the baseline (configural) model used for evaluating invariance across self and observer ratings for the APP-SRF and APP-ORF IA and HI items for the 2-factor model. The procedure for testing invariance across the respondents involved comparing progressively more constrained models that tested for configural invariance (items’ loadings and thresholds free), metric invariance (items’ loadings constrained to be equal and thresholds free), and scalar invariance (items’ loadings and intercepts constrained to be equal). Although the various nested CFA models can be compared using the MLχ2 difference test, this was not used in the current study as ∆MLχ2 values are also inflated by large sample sizes. Instead, we used the differences in approximate fit indices (CFI and RMSEA). For this, the criteria for rejecting invariance for both factor loadings and thresholds were a decrease in CFI of more than 0.01 and an increase in RMSEA of more than 0.015 [38].
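The comparison of nested models by approximate fit indices can be sketched as follows. This reads the rejection criteria as a CFI decrease greater than 0.01 together with an RMSEA increase greater than 0.015, which is an assumption about the intended signs; the function name and the fit values are hypothetical.

```python
def invariance_rejected(cfi_base, cfi_constrained, rmsea_base, rmsea_constrained,
                        cfi_drop_cutoff=0.01, rmsea_rise_cutoff=0.015):
    """Return True if the more constrained model fits meaningfully worse.

    Invariance is rejected when CFI falls by more than cfi_drop_cutoff AND
    RMSEA rises by more than rmsea_rise_cutoff (assumed reading of the text).
    """
    cfi_drop = cfi_base - cfi_constrained
    rmsea_rise = rmsea_constrained - rmsea_base
    return cfi_drop > cfi_drop_cutoff and rmsea_rise > rmsea_rise_cutoff


# Made-up fit values: configural model versus a constrained model.
print(invariance_rejected(0.970, 0.968, 0.050, 0.052))  # small changes
print(invariance_rejected(0.970, 0.940, 0.050, 0.080))  # large changes
```

Requiring both changes at once follows the "and" in the text; a stricter analyst might reject invariance when either cut-off is exceeded.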

Figure 2. Schematic Path Diagram shows the Baseline (configural) model used for evaluating invariance across self and observer ratings for the APP-SRF and APP-ORF. Note. The residuals are not shown in the figure. s1 to s18 are self-ratings for the ADHD symptoms, and o1 to o18 are observer-ratings for the ADHD symptoms.

For the Sample 2 data set, which was used to examine the convergent validity of the APP-SRF and APP-ORF ADHD scales, only the total scale scores for the convergent variables were available. Thus, to evaluate the convergent validity of the factors in the APP-SRF and APP-ORF, we examined Pearson’s correlations of the total scale scores in our preferred ADHD model (if possible) with the total scores in the CAARS [26], i.e., Inattention/Memory problems, Hyperactivity/Restlessness, Impulsivity/Emotional Lability, Problems with Self-Concept, DSM-IV Inattentive Symptoms, DSM-IV Hyperactive-Impulsive Symptoms, DSM-IV ADHD Symptoms Total, and ADHD Index.

There were no missing values for the 18 ADHD items in the APP-SRF or APP-ORF. Table 1 presents the mean, standard deviation, and dispersion statistics of these items. The overall mean (SD) scores for the 18 items for the APP-SRF and APP-ORF were 0.398 (0.129) and 0.323 (0.132), respectively. Therefore, the participants in the study can be considered to exhibit relatively low levels of ADHD pathology.

For the APP-SRF ADHD items, skewness scores varied from −0.78 to 1.58, with no value outside the range of −3 to +3. The kurtosis scores ranged from −2.02 to 0.51, with no value outside of −10 to +10. For the APP-ORF ADHD items, skewness scores varied from −2.02 to 1.83, with no value outside of −3 to +3. The kurtosis scores ranged from −2.02 to 0.51, with no value outside of −10 to +10. Therefore, the skewness and kurtosis findings indicate a relatively normal distribution for all the ADHD items in the APP-SRF and APP-ORF.
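For intuition about these ranges, the skewness and excess kurtosis of a dichotomous item depend only on its endorsement rate. The sketch below assumes the descriptive statistics were computed on the recoded 0/1 item scores, which the overall means of roughly 0.4 and 0.3 suggest but the text does not state. It uses population formulas for a Bernoulli variable, so sample estimators with small-sample corrections will differ slightly. The function names are hypothetical.

```python
import math


def bernoulli_shape(p):
    """Population skewness and excess kurtosis of a 0/1 item endorsed at rate p."""
    if not 0 < p < 1:
        raise ValueError("p must lie strictly between 0 and 1")
    variance = p * (1 - p)
    skewness = (1 - 2 * p) / math.sqrt(variance)
    excess_kurtosis = (1 - 6 * variance) / variance
    return skewness, excess_kurtosis


def within_screening_limits(skewness, kurtosis, skew_limit=3, kurt_limit=10):
    """Apply the screening ranges quoted in the text (-3 to +3, -10 to +10)."""
    return abs(skewness) <= skew_limit and abs(kurtosis) <= kurt_limit


for rate in (0.2, 0.4, 0.5):
    skew, kurt = bernoulli_shape(rate)
    print(rate, round(skew, 2), round(kurt, 2), within_screening_limits(skew, kurt))
```

Under these formulas the excess kurtosis of a binary item cannot fall below −2 (reached at an endorsement rate of 0.5), which is in line with the lower end of the reported kurtosis range.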

Table 2 shows model fit values for the 1-factor, 2-factor, 3-factor, and s-1 bifactor ADHD models for the APP self-ratings and observer-ratings. As can be seen, based on the proposed guidelines [32], the 2-factor model showed good fit in terms of the CFI, TLI and RMSEA values for both self-report and observer-report ratings, while the 1-factor model showed adequate fit. For self-ratings, the 3-factor model showed good fit and close to good fit in terms of CFI and TLI values, respectively, and adequate fit in terms of its RMSEA value. For observer-ratings, the 3-factor model showed adequate fit in terms of its CFI and TLI values, and poor fit in terms of its RMSEA value. For both self- and observer-ratings, the s-1 bifactor model showed good fit for all these values. Based on the criteria proposed by Chen (2007) [38] for interpreting differences between nested models (i.e., ΔCFI > −0.01, and ΔRMSEA > 0.015), for both self- and observer-ratings, the 2-factor model had better fit than the 1-factor model and the 3-factor model. There was no difference between the 2-factor model and the s-1 bifactor model in their ∆CFI and ∆RMSEA values. In scientific explanations, the principle of parsimony favours the simpler model when two models are comparable, and the 2-factor model is the statistically simpler model. In contrast to the 2-factor model, the s-1 bifactor model lacks a specific factor for hyperactivity/impulsivity, believed to be a major symptom group for ADHD. The 2-factor model is therefore also the more clinically meaningful model and, psychometrically, the more practical model in terms of scoring. Thus, the 2-factor model was considered preferable to the other models, including the s-1 bifactor model, for the ADHD items in both the APP-SRF and APP-ORF measures.

Table 3 shows the standardized factor loadings for the preferred 2-factor ADHD model for self- and observer-ratings. All loadings for both factors for self-ratings were significant (p < 0.001) and salient (> 0.30). For observer-ratings, all loadings for the IA factor were significant and salient. For HI, except for the loading of HI5 (“driven by motor”) on the HI factor, all other factor loadings on this factor were significant and salient. Overall, therefore, there was complete clarity for the factor loadings for the APP-SRF. For the APP-ORF, there was complete clarity for the factor loadings for the IA factor. For the HI factor, all but one item loaded significantly and saliently, thereby indicating reasonable (but not full) clarity for this factor in the APP-ORF.

Table 3 also includes the reliabilities (omega and alpha values) of the factors in the 2-factor ADHD model for self- and observer-ratings. All values were high, ranging from 0.87 to 0.95, indicating very good reliabilities for the IA and HI ADHD factors in the APP-SRF and APP-ORF.

Measurement Invariance Across APP-SRF and APP-ORF ADHD Items

Table 4 shows the results for measurement invariance across APP-SRF and APP-ORF ADHD item ratings. As can be seen, the initial configural invariance model (Model C) showed an inadmissible solution, as the correlation of item 2 for self- and observer-ratings was more than one. Considering this, Model C was revised to allow this correlation to be freely estimated (Model C1). This resulted in an admissible solution. For this model, the CFI, TLI and RMSEA showed good fit. Thus, configural invariance was supported. Additional analysis indicated that the full scalar invariance model (Model M) did not differ from the revised configural invariance model (Model C1) in terms of their ∆CFI and ∆RMSEA values. There was also no statistical difference between these models [∆WLSMVχ2 (df = 18) = 22.925, ns]. Considering that the scores were binary, both metric and scalar invariance can be assumed. Error variance invariance was not tested, as many methodologists consider such a test unnecessary for establishing measurement invariance. Overall, therefore, there was support for measurement invariance for the ADHD items across the APP-SRF and APP-ORF.

Given support for measurement invariance, we examined differences in observed scores for IA and HI total scales scores across the APP-SRF and APP-ORF. The scale scores (SD) for IA for the APP-SRF and APP-ORF were 3.81 (2.77) and 2.78 (2.74), respectively. The scale scores (SD) for HI for the APP-SRF and APP-ORF were 2.78 (2.74) and 1.94 (2.74), respectively. Paired sample t-tests indicated higher scores for the APP-SRF for both IA, t (256) = 6.509, p < 0.0001, and HI, t (256) = 5.468, p < 0.0001, scales. Based on guidelines for interpreting d effect sizes [36], the differences for both IA (d = 0.373) and HI (d = 0.306) were small.
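The text does not state which variant of Cohen's d was computed. As a rough check, one common variant, which divides the mean difference by the root of the averaged variances of the two sets of scores, comes close to the reported values when applied to the rounded means and SDs above. The function name is hypothetical, and the choice of formula is an assumption rather than the authors' documented method.

```python
import math


def cohens_d_average_sd(mean_1, sd_1, mean_2, sd_2):
    """Cohen's d using the root of the averaged variances as the standardiser."""
    standardiser = math.sqrt((sd_1 ** 2 + sd_2 ** 2) / 2)
    return (mean_1 - mean_2) / standardiser


# Means and SDs as reported in the text (APP-SRF first, APP-ORF second).
ia_d = cohens_d_average_sd(3.81, 2.77, 2.78, 2.74)
hi_d = cohens_d_average_sd(2.78, 2.74, 1.94, 2.74)
print(round(ia_d, 3), round(hi_d, 3))
```

Small discrepancies in the third decimal place are to be expected because the inputs are rounded to two decimals. Both values fall between Cohen's benchmarks for small (0.2) and medium (0.5) effects.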

Convergent Validities of the APP-SRF and APP-ORF IA and HI Scales

Table 5 shows the correlations of the APP IA and HI total scale scores for self and observer report with the CAARS [26] self and observer report, respectively. For self-report ratings all correlations for IA with all the CAARS-S scales were significant and positive with either moderate or large effect sizes, except for problems with self-concept. For problems with self-concept, the correlation was significant and positive, but of small effect size. The correlations for HI with all the CAARS-S scales were significant and positive with either moderate or large effect sizes.

For observer-report ratings, all correlations for IA and HI with all the CAARS-O scales were significant and positive, except for problems with self-concept. All of these correlations had moderate or large effect sizes, except for IA with hyperactivity/restlessness, which was small. The problems with self-concept total scale score was not correlated significantly with the IA and HI total scale scores. Overall, these correlation findings can be interpreted as providing sufficient support for the construct validity of the APP-SRF and APP-ORF IA and HI scales. Notwithstanding this, the findings limit the strength of conclusions regarding screening utility.

The APP [4] has parallel versions for self-report (APP-SRF) and observer-report (APP-ORF) for the screening of 17 common DSM-5 TR disorders in adults, including screening scales for DSM-5 TR IA and HI ADHD. The current study examined the psychometric properties of these ADHD scales. A CFA supported the theorized 2-factor model, with this model showing better fit than 1-factor, 3-factor and s-1 bifactor models. The factors in the 2-factor model also showed clarity, good reliability (alpha and omega coefficients), and good discriminant and convergent validity. There was also support for measurement invariance across self and observer ratings, indicating sound psychometric properties for the ADHD items in the APP-SRF and APP-ORF. These findings have theoretical, clinical, and practical implications in relation to adult ADHD, and the utilisation of the APP-SRF and APP-ORF for screening ADHD in adults.

First, the support for the 2-factor ADHD model is consistent with existing findings [15,39]. The study also supported 3-factor and s-1 bifactor models, which is consistent with existing findings [13,15,39]. However, unlike existing studies that have demonstrated more support for the 3-factor and s-1 bifactor models than the 2-factor ADHD model [10,13,15,39], the current study found more support for the 2-factor ADHD model. At a practical level, these findings indicate the IA and HI screening scales of the APP-SRF and APP-ORF are suitable for screening DSM-5 TR IA and HI symptoms, respectively. More broadly, our findings provide most support for the way that ADHD symptoms are grouped in DSM-5 TR.

Second, the support found for measurement invariance for ADHD symptoms rated by self and observers indicates that the ADHD items in the APP-SRF and APP-ORF have the same measurement and scaling properties. Because the same observed scores in the APP-SRF and APP-ORF reflect the same levels of the underlying latent construct being measured, their test scores are psychometrically comparable. This is important because it means the APP-SRF and APP-ORF can be used directly to implement current clinical practice guidelines, which recommend that diagnostic evaluation of adult ADHD should include and integrate ADHD symptom information from both self and observers [40]. As our findings support full measurement invariance in terms of configural, metric, and scalar invariance, there is equivalency for ratings provided by self and observer in terms of the basic organization of the ADHD items and constructs, i.e., two factors with nine items in each factor (configural invariance), similarity in how the items are associated with their latent factor (metric invariance), and endorsement of the same observed scores for the same level of the latent scores (scalar invariance).

Theoretically, the support for measurement invariance is inconsistent with the notion that the low associations of self and observer ratings for ADHD symptoms in adults [17,22,23,41] could be related to a positive illusory bias (i.e., bias involving an overestimation of one’s own abilities and competence) [17]. Although not found in the present study, positive illusory bias has been known to contribute to scalar non-invariance, with low scores reported by the self, compared to observers.

Third, our findings supporting the convergent validity of the APP-SRF and APP-ORF ADHD scales, in terms of their associations with virtually all the scale scores of the CAARS-S and CAARS-O (the exception being problems with self-concept), are consistent with existing data [28,29]. Our findings indicate that the APP-SRF and APP-ORF IA scales were associated with the CAARS-S and CAARS-O, respectively, with strong effect sizes for inattention/memory problems and inattentive symptoms, and with moderate effect sizes for hyperactivity/restlessness, impulsivity/emotional lability, and hyperactive/impulsive symptoms. In contrast, the APP-SRF and APP-ORF HI scales were associated with moderate effect sizes for inattention/memory problems and inattentive symptoms, and with strong effect sizes for hyperactivity/restlessness, impulsivity/emotional lability, and hyperactive/impulsive symptoms. These findings provide evidence of differential validity for the APP-SRF and APP-ORF IA and HI scales. The CAARS-S and CAARS-O “problems with self-concept” scales had weak or no association with the APP-SRF and APP-ORF ADHD scales. Thus, the problems with self-concept scales in the CAARS-S and CAARS-O may be of little relevance in understanding adult ADHD.

In summary, our findings support the psychometric properties of the APP-SRF and APP-ORF IA and HI screening scales for use with adults. Importantly, as the ADHD items in these scales correspond to the symptoms proposed for the different presentations of ADHD in the DSM-5 TR, it can be assumed that the APP-SRF and APP-ORF IA and HI screening scales are suitable for adult screening of DSM-5 TR ADHD.

Study Limitations

Although we found comparable fit for the 2-factor and s-1 bifactor models, we adopted the 2-factor model as our preferred model on the principle of parsimony. Thus, it cannot be ruled out completely that the s-1 bifactor model may be a better model than the 2-factor model. Clearly, more studies using the ADHD items in the APP-SRF and APP-ORF are required for further clarity.

As mentioned earlier, for procedural reasons, the APP Self-Report and Observer-Report ratings were dichotomous. It has been argued that such practice can result in a loss of information in the scores obtained and consequently compromise the psychometric properties of a measure [42]. It is therefore conceivable that dichotomization of the APP-SRF and APP-ORF in this study could have affected factor structure, reliability, and model fit, thereby raising the need for caution when interpreting the findings of the study. Conversely, there are findings showing that dichotomization does not affect the psychometric properties of measures [43]. It would be useful to explore in future studies whether this is also the case for the APP-SRF and APP-ORF.

The samples in our study included males and females across a wide age range (18 to 76 years) who had completed an ADHD assessment prior to attending a clinic setting; thus, we are confident the results generalize to the wider population of adults seeking an ADHD assessment in Western Australia (WA). We are unsure, however, how the results would generalize to adults in other states of Australia and to neurodiverse populations, and this is an area for future research. Some of the persons who completed our measures self-referred, and this may bias our sample, thereby limiting the generalizability of our findings to other ADHD populations (e.g., health practitioner referred). There are other limitations that must be acknowledged. Despite showing scalar invariance across self- and observer-ratings, item HI5 (“driven by a motor”) was significantly associated with the HI factor for self-ratings, but not for observer-ratings. In this respect, DSM-5 TR also extends this description for HI5 subsidiarily in terms of it being witnessed by observers as being ‘restless’ or ‘difficult to keep up with’. Corresponding to the focal description of the symptoms provided in DSM-5 TR, in both the APP-ORF and APP-SRF, this symptom (HI5) is specified in terms of being often ‘on the go’ and acting as if ‘driven by a motor’. It is conceivable that while the focal description of this symptom might be adequate for self-ratings, the focal description and/or the subsidiary description may also be needed for observer-ratings. Clinicians and researchers should keep this in mind when using the ADHD items in the APP, and when considering future revisions of the ADHD items in the APP.

Important psychometric properties are still missing for the clinical use of the APP. Most importantly, there is a lack of data on its predictive validity. Related to this, a shortcoming is how the scores are used for screening. In the APP-SRF and APP-ORF, the IA and HI screening scales are measured using items corresponding to their symptoms (including wording in most instances), as presented in DSM-5 TR. All these items are rated on a six-point Likert scale (never = 0, rarely = 1, sometimes = 2, regularly = 3, often = 4, and very often = 5). For calculating screening scores, the item scores were recoded as symptom not present or symptom present, and the sum of the recoded item scores within each screening scale produces a screening score for that disorder. When the screening score for a scale reaches the screening cut-off score, the disorder that it corresponds to is considered present. Currently, the cut-off scores for each screening scale are identical to their symptom threshold cut-off scores in the DSM-5 TR (e.g., if the APP-SRF or APP-ORF IA scale has a score of 5 or more, then IA is considered to have a positive screen, as the symptom threshold cut-off score for ADHD in adults in DSM-5 TR is 5). Given the close alignment of the items in the APP ADHD screening scales with the symptoms for ADHD in DSM-5 TR, this practice seems intuitively prudent. However, establishing cut-off scores for clinical disorders in screening scales is inherently more complex. Best practice standards require the application of empirically derived diagnostic utility statistics (e.g., sensitivity, specificity, positive predictive power and negative predictive power). It is important that clinicians keep this in mind when using the currently recommended cut-off scores for the APP ADHD screening scales.
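The scoring rule and the diagnostic utility statistics described above can be illustrated with a minimal Python sketch. It is not the APP scoring software. The function names are hypothetical, and the recode point `present_from=3` (treating "regularly" or higher as symptom present) is an assumption made purely for illustration, since the rating at which an item counts as present is not stated here. The cut-off of 5 follows the adult symptom threshold described in the text.

```python
from dataclasses import dataclass


def screening_score(item_ratings, present_from=3):
    """Count items whose 0-5 rating is at or above `present_from`.

    `present_from` is an ASSUMED recode point, not the documented APP rule.
    """
    return sum(1 for rating in item_ratings if rating >= present_from)


def screens_positive(item_ratings, cutoff=5, present_from=3):
    """Positive screen when the screening score is `cutoff` or more."""
    return screening_score(item_ratings, present_from) >= cutoff


@dataclass
class DiagnosticUtility:
    sensitivity: float  # TP / (TP + FN)
    specificity: float  # TN / (TN + FP)
    ppv: float          # positive predictive power: TP / (TP + FP)
    npv: float          # negative predictive power: TN / (TN + FN)


def _ratio(numerator, denominator):
    # Return NaN rather than raising when a cell combination is empty.
    return numerator / denominator if denominator else float("nan")


def diagnostic_utility(screen_results, diagnoses):
    """Compare screen outcomes with reference diagnoses (both lists of bool)."""
    tp = fp = tn = fn = 0
    for screened, diagnosed in zip(screen_results, diagnoses):
        if screened and diagnosed:
            tp += 1
        elif screened and not diagnosed:
            fp += 1
        elif not screened and diagnosed:
            fn += 1
        else:
            tn += 1
    return DiagnosticUtility(
        sensitivity=_ratio(tp, tp + fn),
        specificity=_ratio(tn, tn + fp),
        ppv=_ratio(tp, tp + fp),
        npv=_ratio(tn, tn + fn),
    )


if __name__ == "__main__":
    # Hypothetical ratings for the nine IA items of one respondent.
    ia_items = [0, 1, 2, 3, 4, 5, 3, 3, 0]
    print(screening_score(ia_items), screens_positive(ia_items))
```

An empirically derived cut-off would be chosen by computing these statistics at each candidate cut-off against clinician diagnoses, rather than by fixing the cut-off at the DSM-5 TR symptom threshold in advance.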

Finally, although self-report, such as that used in the present study with the APP-SRF, is an effective approach to obtaining the subjective dispositions that only individuals can report on, it is potentially problematic for measuring ADHD symptoms in adults because self-reports are prone to false-positive responses [44].

Overall, this study has provided support for the psychometric properties of the APP-SRF and APP-ORF IA and HI screening scales for use with adults. Given that clinical practice guidelines recommend that diagnostic evaluation of adult ADHD should include and integrate ADHD symptom information from both self and observers, the current findings are significant. ADHD symptoms are highly context-dependent, and relying on a single perspective can lead to significant diagnostic error. The PP provides a measure that clinicians can utilise to address this. Because the APP-SRF and APP-ORF are clinical measures intended to facilitate the diagnosis of ADHD, replication of the present study with a sample of adults clinically diagnosed with ADHD is now required.

Informed written consent was obtained from all participants included in the study. All procedures were performed in accordance with the ethical standards of the Human Research Ethics Committee of the University of Western Australia (Protocol Number #2023/ET000965 as of 2023).

Declaration of Helsinki STROBE Reporting Guideline

This study adhered to the Helsinki Declaration. The Strengthening the Reporting of Observational studies in Epidemiology (STROBE) recommended checklist for reporting research was followed to ensure sufficient detail, clarity, and transparency.

The deidentified data and analytic code are available at: https://osf.io/qe6zs.

RG, conceptualization, methodology, data analysis, validation, original draft writing; SL, conceptualization, methodology, data curation, review & editing; SH, conceptualization, original draft writing, review & editing; LK, data analysis, review & editing.

The authors declare that they have no conflicts of interest.

The authors did not receive any funding for the research/study presented in this article.

We are grateful to the participants who provided consent for their data to be used in future studies.

Gomez R, Langsford S, Houghton S, Karimi L. Psychometric Properties of the PsychProfiler Adult Self-Report and Observer-Report ADHD Items. J Psychiatry Brain Sci. 2026;11(2):e260005. https://doi.org/10.20900/jpbs.20260005.

Copyright © Hapres Co., Ltd.